Anti-Surveillance Clothing Aims to Hide Wearers from Facial Recognition

WHISTLEBLOWING - SURVEILLANCE, 9 Jan 2017

Hyperface project involves printing patterns on to clothing or textiles that computers interpret as a face, in fightback against intrusive technology.

An image of a Hyperface pattern, specifically created to contain thousands of facial recognition hits. Photograph: Adam Harvey

4 Jan 2017 – The use of facial recognition software for commercial purposes is becoming more common. But as Amazon scans faces in its physical shop and Facebook searches users’ photos to tag them, those concerned about their privacy are fighting back.

Berlin-based artist and technologist Adam Harvey aims to overwhelm and confuse these systems by presenting them with thousands of false hits so they can’t tell which faces are real.

The Hyperface project involves printing patterns on to clothing or textiles, which then appear to have eyes, mouths and other features that a computer can interpret as a face.

This is not the first time Harvey has tried to confuse facial recognition software. In a previous project, CV Dazzle, he attempted to create an aesthetic of makeup and hairstyling that would prevent machines from detecting a face.

Speaking at the Chaos Communication Congress hacking conference in Hamburg, Harvey said: “As I’ve looked at in an earlier project, you can change the way you appear, but, in camouflage you can think of the figure and the ground relationship. There’s also an opportunity to modify the ‘ground’, the things that appear next to you, around you, and that can also modify the computer vision confidence score.”

Harvey’s Hyperface project aims to do just that, he says, “overloading an algorithm with what it wants, oversaturating an area with faces to divert the gaze of the computer vision algorithm.”

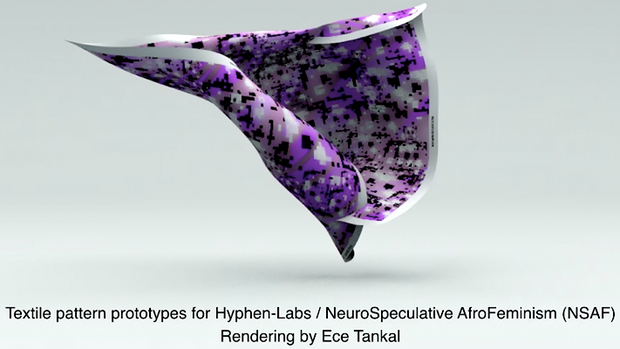

The resultant patterns, which Harvey created in conjunction with international interaction studio Hyphen-Labs, can be worn or used to blanket an area. “It can be used to modify the environment around you, whether it’s someone next to you, whether you’re wearing it, maybe around your head or in a new way.”

Explaining his hopes for how technologies like his would affect the world, Harvey showed an image of a street scene from the 1910s, pointing out that every figure in it is wearing a hat. “In 100 years from now, we’re going to have a similar transformation of fashion and the way that we appear. What will that look like? Hopefully it will look like something that appears to optimise our personal privacy.”

To emphasise the extent to which facial recognition technology changes expectations of privacy, Harvey collated 47 different data points that commercial and academic researchers claim to be able to discover from a 100×100 pixel facial image – around 2.5% of the size of a typical Instagram photo. Those include traits such as “calm” or “kind”, criminal tendencies like “paedophile” or “white collar offender”, and simple demographics like “age” and “gender”.
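The 2.5% figure can be checked with simple arithmetic. The sketch below is illustrative only: it assumes the "typical Instagram photo" is 640×640 pixels, a size the article does not state, and compares pixel areas. The function name `area_fraction` is hypothetical.

```python
def area_fraction(small_side: int, large_side: int) -> float:
    """Return the share of a square image's pixel area taken up by a smaller square image."""
    return (small_side * small_side) / (large_side * large_side)


FACE_SIDE = 100   # from the article: a 100x100 pixel facial image
PHOTO_SIDE = 640  # assumption: a 640x640 photo; the article gives no dimensions

fraction = area_fraction(FACE_SIDE, PHOTO_SIDE)
print(f"{fraction:.2%}")  # prints 2.44%
```

Under that assumption the face image covers roughly 2.4% of the photo, in line with the figure quoted. At a larger assumed size such as 1080×1080, the share would fall below 1%.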

Research from Shanghai Jiao Tong University, for instance, claims to be able to predict criminality from lip curvature, eye inner corner distance and the so-called nose-mouth angle.

“A lot of other researchers are looking at how to take that very small data and turn it into insights that can be used for marketing,” Harvey said. “What all this reminds me of is Francis Galton and eugenics. The real criminal, in these cases, are people who are perpetrating this idea, not the people who are being looked at.”

Harvey and Hyphen-Labs plan to reveal details on the Hyperface project this month, as part of Hyphen-Labs’ new work NeuroSpeculative AfroFeminism.

Go to Original – theguardian.com

One Response to “Anti-Surveillance Clothing Aims to Hide Wearers from Facial Recognition”

Great! Where can we get them????