Gabriel of Peace or Lucifer of War: Generative Artificial Intelligence

TRANSCEND MEMBERS, 20 Feb 2023

Prof Hoosen Vawda – TRANSCEND Media Service

Artificial Intelligence, a Protagonist of Global Peace or a Peace Disruptor? [1]

Lateral view of an actual adult human cadaveric brain specimen, photographed by the author.[2] The average mass of the full, removed human brain of a healthy teenager who has not been exposed to smoking, alcohol or drugs is 1,425 grams. In the living individual, however, the brain is buoyant in the cerebrospinal fluid, which effectively reduces its weight and cushions it against rapid acceleration and deceleration of the head and body during daily movements.[3]

This publication discusses the facility and attribute of intelligence, of varying degrees, bestowed by God when He created Adam from base clay, as narrated in the holy scriptures of the Abrahamic faiths: Judaism, Christianity and Islam. The paper reviews the evolution of intelligence, which is usually the domain and inherent property of humanoids, from antiquity to the present ability of humanoids to create Artificial Intelligence (AI). Civilisation has now reached the stage of Generative Artificial Intelligence, which operates in an autonomous manner, based on feeding machines with never-ending data, globally. The concept that “Data is Gold” is now more relevant than it has ever been. Humanoids have actually reached the stage of taking the first steps from basic artificial intelligence towards a self-generative, autonomous system, where “The Rise of the Machines” is now imminent, to borrow a phrase from the “Terminator” franchise created by film director James Cameron, which first appeared in 1984. The franchise was immortalised in Hollywood by former Mr Universe and former Governor of California, Arnold Schwarzenegger. Arnold Alois Schwarzenegger, born on 30th July 1947, is an Austrian and American actor, film producer, businessman, retired professional bodybuilder and politician who served as the 38th Governor of California between 2003 and 2011. Time magazine named Schwarzenegger one of the 100 most influential people in the world in 2004 and 2007.[4]

The Creation of Adam by Michelangelo, on the ceiling of the Sistine Chapel in the Vatican. The complete ceiling took four years and was finished in 1512; this one section alone took three weeks to paint. Pope Julius II commissioned Michelangelo to paint the ceiling.[5] Note that in this famous artwork the fingers do not touch, for a specific reason: God is the supreme custodian of all knowledge, and He gives humanoids access to this knowledge in increments. In the 21st century, AI is one of these increments.

It is necessary to review the historical evolution of artificial intelligence, the precursor of Generative Artificial Intelligence. The history of artificial intelligence began in antiquity, with myths, stories and rumors of artificial beings endowed with intelligence or consciousness by master craftsmen. This may also include the mystics of the Eastern Hemisphere, such as the Sufis,[6] who accomplished feats which defied scientific explanation, demonstrating the age-old adage that “today’s magic is tomorrow’s science”.

Realistic humanoid automata were built by craftsmen from many civilizations, including Yan Shi, Hero of Alexandria, Al-Jazari, Pierre Jaquet-Droz and Wolfgang von Kempelen. The oldest known automata were the sacred statues of ancient Egypt and Greece. The faithful believed that craftsmen had imbued these figures with very real minds, capable of wisdom and emotion; Hermes Trismegistus wrote that “they have sensus and spiritus … by discovering the true nature of the gods, man has been able to reproduce it”.[7] During the early modern period, these legendary automata were said to possess the magical ability to answer questions put to them. The late medieval alchemist and scholar Roger Bacon was purported to have fabricated a brazen head, and a legend developed that he had been a wizard. These legends were similar to the Norse myth of the Head of Mímir. According to legend, Mímir was known for his intellect and wisdom, and was beheaded in the Æsir-Vanir War. Odin is said to have “embalmed” the head with herbs and spoken incantations over it, so that Mímir’s head remained able to speak wisdom to Odin, who then kept it near him for counsel.[8] In antiquity, the Greek myths of Hephaestus and Pygmalion incorporated the idea of intelligent robots, such as Talos, and artificial beings, such as Galatea and Pandora.[9] Mosaic law prohibits the use of automatons in religion.[10]

In the 10th century BC, Yan Shi presented King Mu of Zhou with mechanical men which were capable of moving their bodies independently.[11] Aristotle (384–322 BC) described the syllogism, a method of formal, mechanical thought, and a theory of knowledge in the Organon.[12] In the 3rd century BC, Ctesibius invented a mechanical water clock with an alarm, considered the first example of a feedback mechanism. In the 1st century AD, Hero of Alexandria created mechanical men and other automatons.[13] In 260 AD, Porphyry wrote the Isagogê, which categorized knowledge and logic.[14] By the 13th century, alchemy was the foremost means by which the creation of artificial intelligence was pursued.

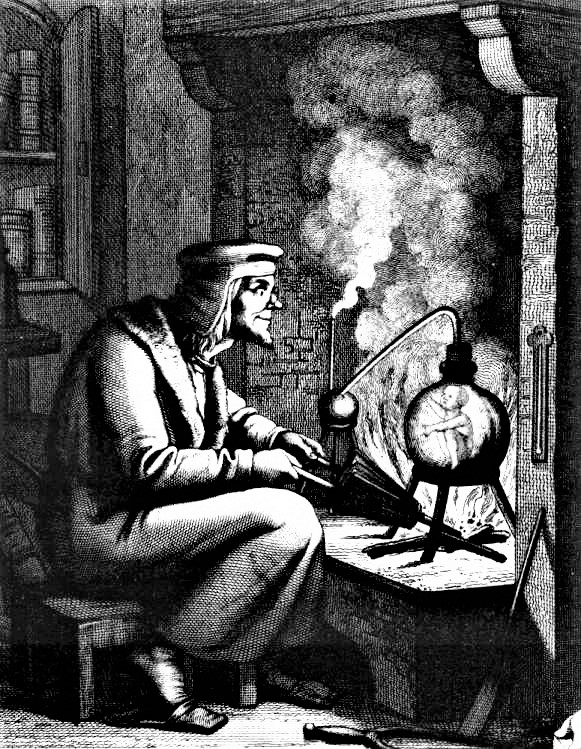

Depiction of a homunculus from Goethe’s Faust[15]

Note the “little man”, the homunculus, in the flask; the bursting of the flask led to his demise.

In “Of the Nature of Things”, the Swiss-born alchemist Paracelsus describes a procedure which he claims can fabricate an “artificial man”. By placing the “sperm of a man” in horse dung and feeding it the “Arcanum of Mans blood” after 40 days, the concoction will become a living infant. Takwin, the artificial creation of life, was a frequent topic of Ismaili alchemical manuscripts, especially those attributed to Jabir ibn Hayyan. Islamic alchemists attempted to create a broad range of life through their work, ranging from plants to animals. In Faust: The Second Part of the Tragedy by Johann Wolfgang von Goethe, an alchemically fabricated homunculus, destined to live forever in the flask in which he was made, endeavors to be born into a full human body. Upon the initiation of this transformation, however, the flask shatters and the homunculus dies. Around 800, Jabir ibn Hayyan developed the Arabic alchemical theory of Takwin, the artificial creation of life in the laboratory, up to and including human life.[16] In 1206, Ismail al-Jazari created a programmable orchestra of mechanical human beings.[17] In 1275, Ramon Llull, a Spanish theologian, invented the Ars Magna, a tool for combining concepts mechanically, based on an Arabic astrological tool, the Zairja. Llull described his machines as mechanical entities that could combine basic truths and facts to produce advanced knowledge. The method would be developed further by Gottfried Leibniz in the 17th century.[18] In the 16th century, Paracelsus claimed to have created an artificial man out of magnetism, sperm and alchemy.[19] In 1580, Rabbi Judah Loew ben Bezalel of Prague is said to have invented the Golem, a clay man brought to life. In the early 17th century, René Descartes proposed that the bodies of animals are nothing more than complex machines (but that mental phenomena are of a different “substance”).

In 1620, Sir Francis Bacon developed an empirical theory of knowledge and introduced inductive logic in his work Novum Organum, a play on Aristotle’s title Organon.[20] In 1623, Wilhelm Schickard drew a calculating clock in a letter to Kepler; this was the first of five unsuccessful attempts at designing a direct-entry calculating clock in the 17th century (including the designs of Tito Burattini, Samuel Morland and René Grillet). In 1642, Blaise Pascal invented the mechanical calculator, the first digital calculating machine.[21] In 1651, Thomas Hobbes published Leviathan and presented a mechanical, combinatorial theory of cognition. He wrote “…for reason is nothing but reckoning”. In 1672, Gottfried Wilhelm Leibniz improved the earlier machines, making the Stepped Reckoner to do multiplication and division. He also invented the binary numeral system and envisioned a universal calculus of reasoning (an alphabet of human thought) by which arguments could be decided mechanically. Leibniz worked on assigning a specific number to each and every object in the world, as a prelude to an algebraic solution to all possible problems. In 1726, Jonathan Swift published Gulliver’s Travels, which includes this description of the Engine, a machine on the island of Laputa: “a Project for improving speculative Knowledge by practical and mechanical Operations”; by using this “Contrivance”, “the most ignorant Person at a reasonable Charge, and with a little bodily Labour, may write Books in Philosophy, Poetry, Politicks, Law, Mathematicks, and Theology, with the least Assistance from Genius or study.” The machine is a parody of the Ars Magna, one of the inspirations of Gottfried Wilhelm Leibniz’s mechanism. In 1747, Julien Offray de La Mettrie published L’Homme Machine, which argued that human thought is strictly mechanical. In 1763, Thomas Bayes’s work An Essay towards solving a Problem in the Doctrine of Chances, published two years after his death, laid the foundations of Bayes’ theorem.

In 1769, Wolfgang von Kempelen built and toured with his chess-playing automaton, The Turk, which Kempelen claimed could defeat human players; it was later shown to be a hoax, concealing a human chess player. In 1805, Adrien-Marie Legendre described the “méthode des moindres carrés”, known in English as the least squares method, which is widely used in data fitting. In 1818, Mary Shelley published Frankenstein; or, The Modern Prometheus, a fictional consideration of the ethics of creating sentient beings.[22] Between 1822 and 1859, Charles Babbage and Ada Lovelace worked on programmable mechanical calculating machines. In 1837, the mathematician Bernard Bolzano made the first modern attempt to formalize semantics. In 1854, George Boole set out to “investigate the fundamental laws of those operations of the mind by which reasoning is performed, to give expression to them in the symbolic language of a calculus”, inventing Boolean algebra. In 1863, Samuel Butler suggested that Darwinian evolution also applies to machines, and speculated that they would one day become conscious and eventually supplant humanity.[23]

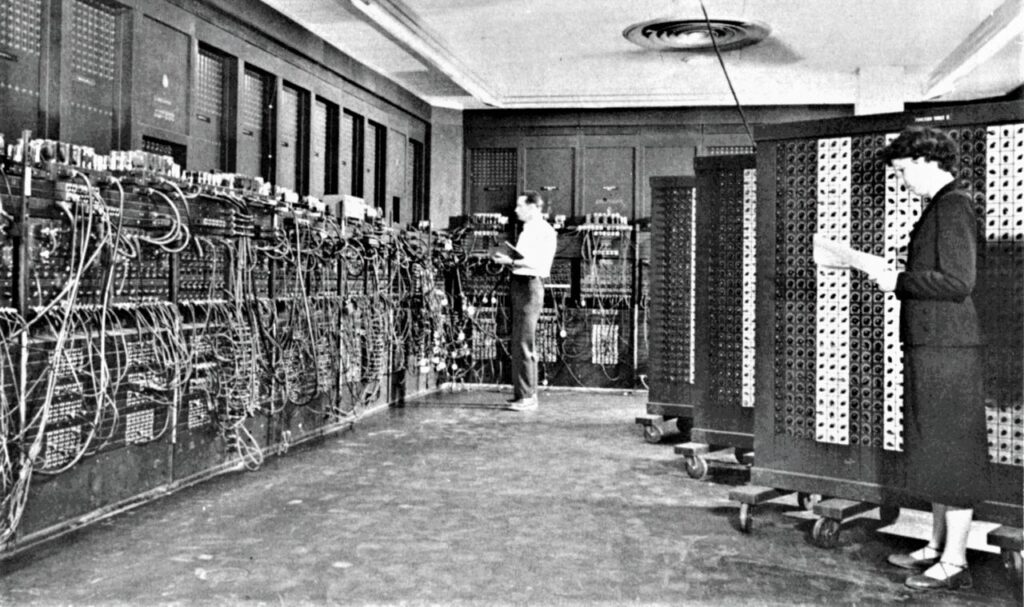

The seeds of modern AI were planted by philosophers who attempted to describe the process of human thinking as the mechanical manipulation of symbols. This work culminated in the invention of the programmable digital computer in the 1940s, a machine based on the abstract essence of mathematical reasoning. This device and the ideas behind it inspired a handful of scientists to begin seriously discussing the possibility of building an electronic brain, hence the birth of the concept of Artificial Intelligence.

ENIAC (Electronic Numerical Integrator And Computer) in Philadelphia, Pennsylvania. Glen Beck (background) and Betty Snyder (foreground) program the ENIAC in building 328 at the Ballistic Research Laboratory (BRL).[24]

The field of AI research was founded at a workshop held on the campus of Dartmouth College, USA during the summer of 1956.[25] Those who attended would become the leaders of AI research for decades. Many of them predicted that a machine as intelligent as a human being would exist in no more than a generation, and they were given millions of dollars to make this vision come true.[26]

Eventually, it became obvious that commercial developers and researchers had grossly underestimated the difficulty of the project.[27] In 1974, in response to criticism from James Lighthill and ongoing pressure from Congress, the U.S. and British governments stopped funding undirected research into artificial intelligence, and the difficult years that followed would later be known as an “AI winter”. Seven years later, a visionary initiative by the Japanese Government inspired governments and industry to provide AI with billions of dollars, but by the late 1980s investors had become disillusioned and withdrew funding again. Investment and interest in AI boomed in the first decades of the 21st century, when machine learning[28] was successfully applied to many problems in academia and industry, thanks to new methods, powerful computer hardware and the collection of immense data sets.

Machine learning is a subset of artificial intelligence. It is focused on teaching computers to learn from data and to improve with experience, instead of being explicitly programmed to do so. In machine learning, algorithms are trained to find patterns and correlations in large data sets and to make the best decisions and predictions based on that analysis. Machine learning applications improve with use and generally become more accurate the more data they have access to. Applications of machine learning are all around us: in our homes, our shopping carts, our entertainment media and our healthcare.[29] AI processes data to make decisions and predictions; machine learning algorithms allow AI not only to process that data, but to use it to learn and get smarter without needing any additional programming. Artificial intelligence is therefore the parent of all the machine learning subsets beneath it, which fit inside one another like concentric circles: within AI is machine learning; within machine learning are neural networks; and within neural networks is deep learning, which uses networks with many layers.
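The idea of learning from data, rather than being explicitly programmed, can be made a little more concrete with the simplest possible case: fitting a straight line to a handful of example points by ordinary least squares, the method Legendre described in 1805. This is only a minimal sketch under stated assumptions, not a description of any system referred to in this article; the data points and the function name `fit_line` are hypothetical, and real machine-learning systems use far larger models and data sets. No rule such as “multiply by 2 and add 1” is written into the program; the slope and intercept are estimated from the examples.

```python
# Minimal sketch: "learning" a linear rule y ≈ slope * x + intercept from examples
# using ordinary least squares. The data below are hypothetical, for illustration only.

def fit_line(xs, ys):
    """Return (slope, intercept) that minimise the sum of squared prediction errors."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # How x and y vary together, and how x varies on its own
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Hypothetical noisy observations of a roughly linear relationship
xs = [1, 2, 3, 4, 5]
ys = [3.1, 4.9, 7.2, 8.8, 11.0]

slope, intercept = fit_line(xs, ys)
print(f"learned rule: y = {slope:.2f} * x + {intercept:.2f}")  # learned rule: y = 1.97 * x + 1.09
print(f"prediction for x = 6: {slope * 6 + intercept:.2f}")    # prediction for x = 6: 12.91
```

Adding more representative examples generally gives a more reliable estimate of the underlying rule, which is the modest, concrete sense in which such systems “improve with more data”.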

An artificial neural network (ANN) is loosely modeled on the neurons in a biological brain. Artificial neurons, called nodes, are clustered together in multiple layers and operate in parallel. When an artificial neuron receives numerical signals, it combines them, typically as a weighted sum, processes the result and signals the other neurons connected to it. As in a human brain, reinforcing the connections that lead to correct outputs results in improved pattern recognition, expertise and overall learning.[30]
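A single artificial neuron of the kind described above can be sketched in a few lines. The example below uses the classic perceptron learning rule, named here plainly as a stand-in for the broader idea of neural reinforcement: the neuron forms a weighted sum of its input signals, fires if that sum is positive, and after each mistake nudges its connection weights towards the correct answer. The class name `Neuron`, the step activation, the learning rate and the logical-AND training data are all illustrative assumptions, not details taken from the sources cited here.

```python
# Minimal sketch of one artificial neuron trained with the perceptron rule.
# Names, data and learning rate are illustrative assumptions.

def step(z):
    """Fire (1) if the combined signal is positive, otherwise stay silent (0)."""
    return 1 if z > 0 else 0


class Neuron:
    def __init__(self, n_inputs):
        self.weights = [0.0] * n_inputs  # connection strengths, initially neutral
        self.bias = 0.0

    def fire(self, inputs):
        # Weighted sum of the incoming signals, then a threshold
        z = sum(w * x for w, x in zip(self.weights, inputs)) + self.bias
        return step(z)

    def train(self, samples, epochs=10, lr=1.0):
        for _ in range(epochs):
            for inputs, target in samples:
                error = target - self.fire(inputs)  # +1, 0 or -1
                # Strengthen or weaken each active connection in proportion to the error
                self.weights = [w + lr * error * x for w, x in zip(self.weights, inputs)]
                self.bias += lr * error


# Hypothetical training data: logical AND of two binary signals
and_samples = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]

neuron = Neuron(2)
neuron.train(and_samples)
print([neuron.fire(x) for x, _ in and_samples])  # [0, 0, 0, 1]
```

Before training, the neuron answers 0 to everything; the repeated small corrections are what turn it into a reliable detector of the “both signals on” pattern.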

This kind of machine learning is called “deep” because its neural networks have many layers; such networks are typically trained on massive volumes of complex and disparate data. Each successive layer works on the outputs of the layer before it, extracting increasingly higher-level features. For example, a deep learning system that is processing nature images and looking for Gloriosa daisies will, at the first layer, recognize a plant. As the signal moves through the neural layers, it will then identify a flower, then a daisy, and finally a Gloriosa daisy. Examples of deep learning applications include speech recognition, image classification and pharmaceutical analysis.
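The idea that each layer builds on the outputs of the layer before it can be illustrated with a tiny two-layer network. In the sketch below, the first (“hidden”) layer computes two simple features of its inputs, roughly OR and NOT-AND, and the second layer combines those features into a higher-level one, exclusive-or (XOR), which no single threshold neuron of this kind can compute on its own. The weights are set by hand purely for illustration, whereas a real deep network has many more layers and learns its weights from data; the names `layer`, `network`, `HIDDEN_W` and the rest are hypothetical.

```python
# Minimal sketch of layered feature extraction with hand-set (hypothetical) weights.

def step(z):
    return 1 if z > 0 else 0


def layer(inputs, weights, biases):
    """One layer: each neuron takes a weighted sum of all inputs plus its bias."""
    return [step(sum(w * x for w, x in zip(ws, inputs)) + b)
            for ws, b in zip(weights, biases)]


# Hidden layer: neuron 1 behaves like OR, neuron 2 like NAND (NOT-AND)
HIDDEN_W = [[1, 1], [-1, -1]]
HIDDEN_B = [-0.5, 1.5]
# Output layer: AND of the two hidden features, which yields XOR of the raw inputs
OUTPUT_W = [[1, 1]]
OUTPUT_B = [-1.5]


def network(x):
    features = layer(x, HIDDEN_W, HIDDEN_B)        # lower-level features
    return layer(features, OUTPUT_W, OUTPUT_B)[0]  # higher-level combination


# The second column printed reads 0, 1, 1, 0: the XOR pattern
for x in [(0, 0), (0, 1), (1, 0), (1, 1)]:
    print(x, network(x))
```

Neither hidden neuron “knows” about XOR; the higher-level concept only appears when the second layer combines their outputs, which is the same principle, on a vastly smaller scale, as the plant, flower, daisy progression described above.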

Generative AI refers to artificial intelligence algorithms that use existing content, such as text, audio files or images, to create new, plausible content. In other words, it allows computers to abstract the underlying patterns in the input and then use them to generate similar content. It offers immense benefits, such as:

- Generating higher-quality outputs by self-learning from each set of data.

- Lowering the risks associated with a project.

- Training reinforced machine learning models to be less biased.

- Enabling depth prediction without sensors.

- Enabling localization and regionalization of content via deepfakes.[31] The 21st century’s answer to “photoshopping”, deepfakes use a form of artificial intelligence called deep learning to make images of fake events, hence the name.

- Allowing robots to comprehend more abstract concepts both in simulation and the real world.
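As a very small illustration of abstracting the underlying pattern in some input and then generating similar content, the sketch below learns which character tends to follow each pair of characters in a short text, then samples new text from those statistics. This character-level Markov chain is a deliberately simple stand-in, far removed from the GANs and large neural models used in practice; the corpus, the function names `train` and `generate`, and the two-character context are assumptions made purely for the example.

```python
import random
from collections import defaultdict


def train(text, order=2):
    """Map every run of `order` characters to the characters observed right after it."""
    model = defaultdict(list)
    for i in range(len(text) - order):
        model[text[i:i + order]].append(text[i + order])
    return model


def generate(model, seed, length, rng, order=2):
    """Extend `seed` one character at a time by sampling from the learned followers."""
    out = seed
    for _ in range(length):
        followers = model.get(out[-order:])
        if not followers:  # context never seen in training: stop early
            break
        out += rng.choice(followers)
    return out


# Hypothetical training text
corpus = "peace is possible. peace is patient. war is not inevitable. "
model = train(corpus)
sample = generate(model, "pe", 40, random.Random(7))
# New text in which every 3-character window also occurs somewhere in the corpus
print(sample)
```

Because the model can only recombine character sequences it has already seen, it also illustrates, in miniature, the “pseudo imagination” limitation discussed below.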

It is important to examine the emergence of deepfake technology, which has become a significant concern for individuals and businesses. In recent years, it has become a favorite tool of fraudsters for obtaining personal information for identity-related fraud. It is necessary to understand how deepfake technology is used, and what can be done, proactively, to prevent its misuse.

Given these significant benefits, Generative AI can be used for a range of purposes:

- Identity Protection: Generative AI avatars have been used to protect the identity of interviewees in news reports about the persecution of LGBTQ people in Russia.

- Image Processing: It helps in intelligent upscaling of low-resolution images to high-resolution images.

- Film Restoration: It enhances old images and old movies by upscaling them to 4K and beyond, generating additional frames to reach 60 frames per second instead of 24 or fewer, removing noise, adding colors and sharpening the picture.

- Audio Synthesis: Generative AI can turn a computer-generated voice into one that sounds convincingly human. Even the narrator of a video can be a product of generative AI.

- Healthcare: Generative AI can be employed to design prosthetic limbs, organic molecules and other items from scratch, which can then be produced through 3D printing, CRISPR and other technologies.

- It can also contribute to everything from the early identification of potential malignancy to more effective treatment plans. IBM is currently using this technology to research antimicrobial peptides (AMPs) in the search for drugs for COVID-19.

While Generative AI makes it possible for machines to create new content effectively, it also comes with a set of limitations:

- Hard to Control: Some Generative AI models, such as generative adversarial networks (GANs), are unstable and hard to control; they sometimes do not generate the expected outputs, and it is hard to figure out why.

- Pseudo Imagination: Generative AI algorithms still need a vast amount of training data to perform tasks. GANs cannot create entirely new things. They only combine what they know in new ways.

- Security: Malicious actors can use Generative AI for deceitful purposes, such as scamming people, committing fraud and creating fake, spammy news.

The bottom line is this: in The Satanic Bible by Anton LaVey,[32] the founder of the Church of Satan, Lucifer is portrayed as one of the four crown princes of hell, particularly that of the East, the ‘lord of the air’, and is called the bringer of light, the morning star, intellectualism and enlightenment.[33] The evolution of Artificial Intelligence and its progeny, Generative Artificial Intelligence, embodies this concept of enlightenment, based on the intellectual evolution of the brain since the appearance, in phylogeny, of our close evolutionary relatives, Homo neanderthalensis, the Neanderthals.[34] Neanderthals are an extinct species of hominids that were the closest relatives of modern human beings. They lived throughout Europe and parts of Asia from about 400,000 until about 40,000 years ago, and they were adept at hunting large Ice Age animals.[35]

Deepfakes are a new technique that cybercriminals are using to trick security systems. If we don’t take the necessary steps, deepfake technology may pose a significant threat to the future of identity verification. Fraudsters are becoming innovative with the techniques they use to commit crimes: nowadays, they are using advanced technologies like artificial intelligence (AI) and machine learning to obtain personal and financial information, which is of great concern for identity verification services. Measures are being formulated to spot and prevent the use of deepfake technology in general. It is an emerging menace, which will definitely cause peace disruption in the hands of vindictive organisations and governments in the future.

While deepfake technology has many positive uses, it has gained widespread attention for its applications in fake news, hoaxes, celebrity pornography and identity theft. As politicians, celebrities and other high-profile personalities have many pictures and videos on the internet for public viewing, they make easy targets for deepfakes. Unfortunately, in recent times, fraudsters have started using this technique for identity-related fraud as well. The positive applications are real: for example, deepfake technology can help filmmakers and 3D artists alter video transcripts and create amazing pieces of art, and it can also be used for educational purposes. Sadly, its use for creating fake content and for nefarious purposes is increasing rapidly. According to an article published in Tech Times, the number of deepfake videos found online increased by 84% in less than a year, between 2018 and 2019.

In 2019, an unusual cybercrime made headlines across the globe. Fraudsters used an AI-powered deepfake to fool a company into a massive wire transfer: they mimicked the CEO’s voice to trick his company into transferring $243,000 to their bank account. This shows that deepfake technology can be used for many different types of fraud.

Hence, AI and its progeny, including Generative AI, are operating in a bipolar mode of functionality. While most of AI is operating for the good of humanity, following the tenets of the Archangel Gabriel in the Biblical context, evil peace disruptors around the globe are also demonstrating that they follow in the footsteps of the fallen angel, Lucifer. Humanoids often share videos and pictures on social media platforms like Instagram and Facebook. Fraudsters take full advantage of these freely available files and can use them to create deepfakes and then access our personal information. Deepfake technology has improved rapidly in the past six months; therefore, miscreants can easily use it to commit identity theft, open fake accounts, take over existing accounts or even initiate a nuclear attack. Lucifer will definitely have his day.

Views of the famous “Terminator forearm”, a legacy of James Cameron’s[36] Terminator franchise. Artificial intelligence has endowed us with limitless possibilities. From prosthetics and intelligent marketing to fraud prevention and 24/7 customer support, artificial intelligence has transformed every aspect of business and life. Today, it can also enable machines to use textual or visual data to create new content via what we can refer to as Generative AI.[37]

References:

[1] Personal quote by the author, February 2023.

[2] Lateral view of an embalmed, cadaveric human brain specimen, photographed by the author, 2006.

[3] Personal research for a PhD by the author. University of the Witwatersrand, Johannesburg, South Africa. 1995

[4] https://www.bing.com/search?q=Arnold%20Alois%20Schwarzenegger&form=IPRV10

[5] https://www.bing.com/ck/a?!&&p=82a08d7e84357616JmltdHM9MTY3NjA3MzYwMCZpZ3VpZD0zZjE2MGRmOC05OTA4LTY4NzYtMjUwYi0xZjZmOThmNTY5Y2UmaW5zaWQ9NTE5Mg&ptn=3&hsh=3&fclid=3f160df8-9908-6876-250b-1f6f98f569ce&psq=the+creation+of+adam+full+picture&u=a1aHR0cHM6Ly93d3cubWljaGVsYW5nZWxvLm9yZy90aGUtY3JlYXRpb24tb2YtYWRhbS5qc3A&ntb=1

[6] https://www.psychicsdirectory.com/articles/sufis-differ-muslims/#:~:text=Sufism%20is%20a%20religious%20order%20that%20can%20exist,that%20are%20formed%20around%20a%20wali%2C%20or%20grandmasters.

[7] https://en.wikipedia.org/wiki/History_of_artificial_intelligence#CITEREFNewquist1994

[8] https://en.wikipedia.org/wiki/History_of_artificial_intelligence#CITEREFNewquist1994:~:text=Hollander%2C%20Lee%20M.%20(1964).%20Heimskringla%3B%20history%20of%20the%20kings%20of%20Norway.%20Austin%3A%20Published%20for%20the%20American%2DScandinavian%20Foundation%20by%20the%20University%20of%20Texas%20Press.%20ISBN%C2%A00%2D292%2D73061%2D6.%20OCLC%C2%A0638953.

[9] https://en.wikipedia.org/wiki/Timeline_of_artificial_intelligence#:~:text=McCorduck%202004%2C%20pp.%C2%A04%E2%80%935

[10] https://en.wikipedia.org/wiki/Timeline_of_artificial_intelligence#CITEREFMcCorduck2004

[11] https://en.wikipedia.org/wiki/Timeline_of_artificial_intelligence#CITEREFNeedham!_1986

[12] https://en.wikipedia.org/wiki/Timeline_of_artificial_intelligence#:~:text=Giles%2C%20Timothy%20(2016).%20%22Aristotle%20Writing%20Science%3A%20An%20Application%20of%20His%20Theory%22.%20Journal%20of%20Technical%20Writing%20and%20Communication.%2046%3A%2083%E2%80%93104.%20doi%3A10.1177/0047281615600633.%20S2CID%C2%A0170906960

[13] https://en.wikipedia.org/wiki/Timeline_of_artificial_intelligence#CITEREFMcCorduck2004

[14] https://en.wikipedia.org/wiki/Timeline_of_artificial_intelligence#CITEREFRussellNorvig2003

[15] https://upload.wikimedia.org/wikipedia/commons/thumb/3/37/Homunculus_Faust.jpg/255px-Homunculus_Faust.jpg

[16] https://en.wikipedia.org/wiki/Timeline_of_artificial_intelligence#:~:text=O%27Connor%2C%20Kathleen%20Malone%20(1994)%2C%20The%20alchemical%20creation%20of%20life%20(takwin)%20and%20other%20concepts%20of%20Genesis%20in%20medieval%20Islam%2C%20University%20of%20Pennsylvania%2C%20pp.%C2%A01%E2%80%93435%2C%20retrieved%2010%20January%202007.

[17] http://www.shef.ac.uk/marcoms/eview/articles58/robot.html

[18] https://en.wikipedia.org/wiki/Timeline_of_artificial_intelligence#CITEREFMcCorduck2004

[19] https://en.wikipedia.org/wiki/Timeline_of_artificial_intelligence#CITEREFMcCorduck

[20] https://en.wikipedia.org/wiki/Timeline_of_artificial_intelligence#:~:text=Sir%20Francis%20Bacon%20(2000).%20Francis%20Bacon%3A%20The%20New%20Organon%20(Cambridge%20Texts%20in%20the%20History%20of%20Philosophy).%20Cambridge%20University%20Press.

[21] https://en.wikipedia.org/wiki/Timeline_of_artificial_intelligence#CITEREFMcCorduck2004

[22] https://en.wikipedia.org/wiki/Timeline_of_artificial_intelligence#CITEREFMcCorduck2004

[23] https://en.wikipedia.org/wiki/Project_Gutenberg

[24] https://upload.wikimedia.org/wikipedia/commons/thumb/4/4e/Eniac.jpg/1280px-Eniac.jpg

[25] https://en.wikipedia.org/wiki/History_of_artificial_intelligence#:~:text=Kaplan%2C%20Andreas%3B%20Haenlein%2C%20Michael%20(2019).%20%22Siri%2C%20Siri%2C%20in%20my%20hand%3A%20Who%27s%20the%20fairest%20in%20the%20land%3F%20On%20the%20interpretations%2C%20illustrations%2C%20and%20implications%20of%20artificial%20intelligence%22.%20Business%20Horizons.%2062%3A%2015%E2%80%9325.%20doi%3A10.1016/j.bushor.2018.08.004.%20S2CID%C2%A0158433736.

[26] https://en.wikipedia.org/wiki/History_of_artificial_intelligence#CITEREFNewquist1994

[27] https://en.wikipedia.org/wiki/History_of_artificial_intelligence#CITEREFNewquist1994

[28] https://www.bing.com/search?q=what+is+machine+learning+definition&cvid=2f45124b2a2f4073b8d15a8d4927213a&aqs=edge.2.0l9j69i11004.15343j0j1&pglt=41&FORM=ANNAB1&PC=U531#:~:text=NOUN-,the%20use%20and%20development%20of%20computer%20systems%20that%20are%20able%20to%20learn%20and%20adapt%20without%20following%20explicit%20instructions%2C%20by%20using%20algorithms%20and%20statistical%20models%20to%20analyse%20and%20draw%20inferences%20from%20patterns%20in%20data%3A,-%22the%20application%20of

[29] https://www.sap.com/insights/what-is-machine-learning.html#:~:text=Machine%20learning%20is%20a%20subset%20of%20artificial%20intelligence%20(AI).%20It,homes%2C%20our%20shopping%20carts%2C%20our%20entertainment%20media%2C%20and%20our%20healthcare.

[30] https://www.sap.com/insights/what-is-machine-learning.html

[31] https://www.bing.com/search?q=what+is+deep+fake+technology&cvid=e2db6361cc0944c098149d2cb9ef2e71&aqs=edge.1.0l9j69i11004.10240j0j1&pglt=41&FORM=ANNAB1&PC=U531

[32] https://www.bing.com/ck/a?!&&p=98134befaa52af23JmltdHM9MTY3NTQ2ODgwMCZpZ3VpZD0zZjE2MGRmOC05OTA4LTY4NzYtMjUwYi0xZjZmOThmNTY5Y2UmaW5zaWQ9NTIxOQ&ptn=3&hsh=3&fclid=3f160df8-9908-6876-250b-1f6f98f569ce&psq=Anton+LaVey%27s&u=a1aHR0cHM6Ly9lbi53aWtpcGVkaWEub3JnL3dpa2kvQW50b25fTGFWZXk&ntb=1

[33] LaVey, Anton Szandor (1969). “The Book of Lucifer: The Enlightenment”. The Satanic Bible. New York: Avon. ISBN 978-0-380-01539-9.

[34] https://www.bing.com/search?q=who+were+the+neanderthal+people&qs=SSA&pq=who+are+the+neadnderthals&sk=SC4SSA1&sc=6-25&cvid=6EB0DE9B567A41D6BEF506101CA2172F&FORM=QBRE&sp=6#:~:text=DNA%2C%20%26%20Facts%20%7C%20Britannica-,https%3A//www.britannica.com/topic/Neanderthal,-Web

[35] https://www.bing.com/ck/a?!&&p=290c56d1d0f1b99bJmltdHM9MTY3NTQ2ODgwMCZpZ3VpZD0zZjE2MGRmOC05OTA4LTY4NzYtMjUwYi0xZjZmOThmNTY5Y2UmaW5zaWQ9NTE5Ng&ptn=3&hsh=3&fclid=3f160df8-9908-6876-250b-1f6f98f569ce&psq=neanderthal+man&u=a1aHR0cHM6Ly9lbi53aWtpcGVkaWEub3JnL3dpa2kvTmVhbmRlcnRoYWw&ntb=1

[36] https://www.bing.com/images/search?view=detailV2&ccid=DR3RBASx&id=03AF230F150C9015DFDE8D8EA5F5E2CD751F4B02&thid=OIP.DR3RBASxlJUZ1P9ltiMBGgHaFH&mediaurl=https%3a%2f%2fcbsnews1.cbsistatic.com%2fhub%2fi%2fr%2f2014%2f10%2f24%2f1439ed34-465b-4d6e-bc48-9b700458778e%2fresize%2f1240x930%2f023870a7b3d8dbb04f763d9688fc4c08%2fjamescameron1.jpg&cdnurl=https%3a%2f%2fth.bing.com%2fth%2fid%2fR.0d1dd10404b1949519d4ff65b623011a%3frik%3dAksfdc3i9aWOjQ%26pid%3dImgRaw%26r%3d0&exph=858&expw=1240&q=James+Cameron+Terminator+Forearm&simid=608038456281352017&FORM=IRPRST&ck=1FFC7EB32FEA6FF7C4AE5A4B86EAE4FC&selectedIndex=16&ajaxhist=0&ajaxserp=0

[37] https://www.analyticsinsight.net/wp-content/uploads/2021/01/GAI.jpg

______________________________________________

Professor G. Hoosen M. Vawda (Bsc; MBChB; PhD.Wits) is a member of the TRANSCEND Network for Peace Development Environment.

Director: Glastonbury Medical Research Centre; Community Health and Indigent Programme Services; Body Donor Foundation SA.

Principal Investigator: Multinational Clinical Trials

Consultant: Medical and General Research Ethics; Internal Medicine and Clinical Psychiatry: UKZN, Nelson R. Mandela School of Medicine

Executive Member: Inter Religious Council KZN SA

Public Liaison: Medical Misadventures

Activism: Justice for All

Email: vawda@ukzn.ac.za

Tags: Artificial Intelligence AI, Education for Peace, Peace Culture, Peace Technology

This article originally appeared on Transcend Media Service (TMS) on 20 Feb 2023.

Anticopyright: Editorials and articles originated on TMS may be freely reprinted, disseminated, translated and used as background material, provided an acknowledgement and link to the source, TMS: Gabriel of Peace or Lucifer of War: Generative Artificial Intelligence, is included. Thank you.

This work is licensed under a CC BY-NC 4.0 License.